3D Programming with JavaScript

I needed to develop a 3D interface for simulating a two-player version of PacMan. I also enjoy experimenting with different tools for game development, so I decided to look into developing 3D applications for the web.

This article explains some of the concepts that will be useful when learning 3D programming with JavaScript. It also contains some information about popular JavaScript-based 3D and physics APIs that can ease the development process and let the developer work on ideas rather than technical plumbing.

Modern Browsers

Modern browsers have evolved drastically. Browsers were initially only capable of rendering HTML and submitting forms; they were later enhanced to let developers load parts of the page asynchronously. Demand for more interactive applications on the web grew and led to the development and use of browser plugins like Flash. As that demand extended to compatibility across platforms such as mobile devices, browsers needed something native that gave developers the freedom to build applications for many platforms. This led to the creation of the <canvas> element.

The canvas element is simply an HTML element that provides a surface for drawing shapes on the page with JavaScript.

To accommodate different drawing needs, the canvas provides two different contexts:

2D Context

The 2D context allows for drawing simple shapes in a step-by-step manner. Drawing a shape involves setting the brush properties first and then drawing the specific shape. The 2D context makes it easy to draw text, lines, arcs, rectangles, etc.
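
As a rough illustration of the "set the brush, then draw" style, here is a minimal sketch. The function name drawShapes, the colours, and the coordinates are made up for this example; ctx is assumed to be the 2D context of a canvas, obtained in a browser with something like document.querySelector('canvas').getContext('2d').

```javascript
// Draws a rectangle, a circle, and a label.
// Assumption: `ctx` is a CanvasRenderingContext2D supplied by the caller.
function drawShapes(ctx) {
  // Set the brush first, then draw the shape that uses it.
  ctx.fillStyle = 'blue';
  ctx.fillRect(10, 10, 100, 50); // x, y, width, height

  ctx.fillStyle = 'yellow';
  ctx.beginPath();
  ctx.arc(200, 60, 40, 0, 2 * Math.PI); // centre x, centre y, radius, start angle, end angle
  ctx.fill();

  ctx.fillStyle = 'black';
  ctx.font = '16px sans-serif';
  ctx.fillText('Hello canvas', 10, 100); // text, x, y
}
```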

3D Context

The 3D context is in the form of the WebGL API. WebGL allows for drawing any 3D geometry from any perspective or point of view. This allows for more complex shapes and geometries to be drawn.
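
Getting hold of the 3D context looks much like getting the 2D one. The sketch below is only an illustration: the helper names getWebGLContext and clearToBlack are invented here, and the fallback to 'experimental-webgl' is an assumption about wanting to support older browsers.

```javascript
// Returns a WebGL rendering context for the given canvas element,
// or null if the browser cannot provide one.
function getWebGLContext(canvas) {
  // Older browsers exposed the context under the 'experimental-webgl' name.
  return canvas.getContext('webgl') || canvas.getContext('experimental-webgl') || null;
}

// Clears the whole drawing surface to opaque black.
function clearToBlack(gl) {
  gl.clearColor(0.0, 0.0, 0.0, 1.0); // red, green, blue, alpha
  gl.clear(gl.COLOR_BUFFER_BIT);
}

// Possible use in a browser:
//   const gl = getWebGLContext(document.querySelector('canvas'));
//   if (gl) { clearToBlack(gl); } else { /* fall back or show a message */ }
```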

Web Browsers

Most modern browsers, as well as their mobile counterparts, support WebGL. These include Google Chrome, Safari, Firefox, Opera, and even the latest versions of Internet Explorer. This is great for distributing your application widely.

WebGL

WebGL stands for “Web Graphics Library”. WebGL uses the canvas element to render drawings, just like the 2D context. WebGL is exposed purely as a JavaScript API, which is great because it works on a variety of operating systems and browsers. WebGL is based on the OpenGL ES (Embedded Systems) 2.0 specification, so it is supported by typical mobile device hardware. Best of all, WebGL is royalty free; no licence is required to use the API.

Hardware Acceleration

WebGL leverages the GPU (Graphics Processing Unit) for hardware acceleration. GPUs are geared towards graphics calculations and rendering. The aim is to calculate and render complex graphics as efficiently as possible, which is not a job the CPU is well suited to.

What’s in the box?

3D rendering with WebGL revolves around two main concepts: the Vertex Shader and the Fragment Shader. This might sound complicated, but in essence:

- The Vertex Shader is a position calculator. It handles the mathematics and calculations for converting points so that they are positioned correctly.

- The Fragment Shader is a colour chooser. It determines what colour different elements in the 3D space should be.
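
To make the two roles a little more concrete, here is a minimal sketch of a shader pair and a helper that compiles them. Shaders are written in GLSL and handed to WebGL as strings. The names aPosition, uTransform, and compileShader are chosen for this example only, and the fixed orange colour is arbitrary.

```javascript
// Vertex shader: the "position calculator". It multiplies each incoming
// point by a transformation matrix supplied from JavaScript.
const vertexShaderSource = `
  attribute vec3 aPosition;
  uniform mat4 uTransform;
  void main() {
    gl_Position = uTransform * vec4(aPosition, 1.0);
  }
`;

// Fragment shader: the "colour chooser". This one paints everything orange.
const fragmentShaderSource = `
  precision mediump float;
  void main() {
    gl_FragColor = vec4(1.0, 0.5, 0.0, 1.0);
  }
`;

// Compiles one shader and throws if the GLSL does not compile.
// Assumption: `gl` is a WebGL rendering context supplied by the caller;
// `type` is gl.VERTEX_SHADER or gl.FRAGMENT_SHADER.
function compileShader(gl, type, source) {
  const shader = gl.createShader(type);
  gl.shaderSource(shader, source);
  gl.compileShader(shader);
  if (!gl.getShaderParameter(shader, gl.COMPILE_STATUS)) {
    const log = gl.getShaderInfoLog(shader);
    gl.deleteShader(shader);
    throw new Error('Shader failed to compile: ' + log);
  }
  return shader;
}
```

The two compiled shaders would still need to be attached to a program and linked before anything is drawn, which hints at how much setup raw WebGL involves.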

Coding with Raw WebGL

WebGL is an API that has brought amazing capabilities to modern browsers. As with any raw 3D development, there are some pains.

- WebGL has many settings and configurations. One needs to understand what settings are required, how they are used, and what the correct setting should be. These are typically settings and configurations for the Vertex Shader and Fragment Shader.

- Rendering simple 3D shapes with WebGL can be cumbersome due to the amount of code required. WebGL expects the developer to provide all the vertices of every geometry (creating a cube involves approximately 112 lines of code). Developing and debugging this can potentially be a nightmare.

- Too much plumbing and mathematics, not enough fun. We all want to see our ideas come to life, and not be bogged down by details.
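
To give a feel for the second point, the sketch below shows just the data for a cube and the calls that upload it to the GPU. It is an illustration rather than a complete program: shaders, matrices, and the actual draw call are all still missing, and the names cubeVertices, cubeIndices, and uploadCube are made up for this example.

```javascript
// The 8 corners of a unit cube centred on the origin, as x, y, z triples.
const cubeVertices = new Float32Array([
  -0.5, -0.5,  0.5, // 0: front, bottom left
   0.5, -0.5,  0.5, // 1: front, bottom right
   0.5,  0.5,  0.5, // 2: front, top right
  -0.5,  0.5,  0.5, // 3: front, top left
  -0.5, -0.5, -0.5, // 4: back, bottom left
   0.5, -0.5, -0.5, // 5: back, bottom right
   0.5,  0.5, -0.5, // 6: back, top right
  -0.5,  0.5, -0.5, // 7: back, top left
]);

// Each face is two triangles; each triangle is three indices into the
// corner list above, listed counter-clockwise as seen from outside the cube.
const cubeIndices = new Uint16Array([
  0, 1, 2,  0, 2, 3, // front
  1, 5, 6,  1, 6, 2, // right
  5, 4, 7,  5, 7, 6, // back
  4, 0, 3,  4, 3, 7, // left
  3, 2, 6,  3, 6, 7, // top
  4, 5, 1,  4, 1, 0, // bottom
]);

// Copies the cube data into GPU buffers.
// Assumption: `gl` is a WebGL rendering context supplied by the caller.
function uploadCube(gl) {
  const vertexBuffer = gl.createBuffer();
  gl.bindBuffer(gl.ARRAY_BUFFER, vertexBuffer);
  gl.bufferData(gl.ARRAY_BUFFER, cubeVertices, gl.STATIC_DRAW);

  const indexBuffer = gl.createBuffer();
  gl.bindBuffer(gl.ELEMENT_ARRAY_BUFFER, indexBuffer);
  gl.bufferData(gl.ELEMENT_ARRAY_BUFFER, cubeIndices, gl.STATIC_DRAW);

  return { vertexBuffer, indexBuffer, indexCount: cubeIndices.length };
}
```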

These pains can be avoided by using a 3D library like three.js, but before jumping straight into it, there should be a way to create and maintain a standard JavaScript project.

Standardised JavaScript Projects

When starting out with a new technology, learning a new language, or building any project in general, it’s good to have a basic template to work from. Usually the template takes care of the settings the project requires to run, dependency management, builds, and so on.

When working with JavaScript, there is an abundance of tools that are useful in developing a project.

Yeoman

Yeoman is a great tool for generating a standard JavaScript project. Yeoman includes generators that provide a boilerplate project for most popular JavaScript frameworks and platforms, such as Angular.js and Node.js. Yeoman generates directory and file structures with default Grunt and Bower configurations for the respective project.

Grunt

Grunt is used to build your project and check it for correctness in terms of syntax and the general semantics of JavaScript. Grunt will check your JavaScript files in real time and notify you of any issues with your code as they happen. Grunt also has a nifty lightweight HTTP server that can be used to live deploy projects and test them during development.
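
Grunt is configured through a Gruntfile.js at the root of the project. The sketch below is a hypothetical configuration written for this article, not the file that any particular Yeoman generator produces. It assumes the grunt-contrib-jshint, grunt-contrib-connect, and grunt-contrib-watch plugins are installed, and the paths and port are placeholders.

```javascript
// Gruntfile.js (hypothetical example; paths, port, and task layout are assumptions)
function configure(grunt) {
  grunt.initConfig({
    // Lint the project's JavaScript files.
    jshint: {
      all: ['Gruntfile.js', 'app/scripts/**/*.js'],
    },
    // Serve the app directory over HTTP on port 9000.
    connect: {
      server: {
        options: { port: 9000, base: 'app', livereload: true },
      },
    },
    // Re-run the linter and reload the browser whenever a script changes.
    watch: {
      scripts: {
        files: ['app/scripts/**/*.js'],
        tasks: ['jshint'],
        options: { livereload: true },
      },
    },
  });

  grunt.loadNpmTasks('grunt-contrib-jshint');
  grunt.loadNpmTasks('grunt-contrib-connect');
  grunt.loadNpmTasks('grunt-contrib-watch');

  // `grunt serve` lints once, starts the server, then keeps watching for changes.
  grunt.registerTask('serve', ['jshint', 'connect:server', 'watch']);
}

module.exports = configure;
```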

Bower

Bower is a dependency management tool; it allows dependencies to be imported and included in a project automatically. With Bower, there is no need to download third-party dependencies manually.

Setting Up a Three.js Project

The following commands will work on any UNIX-like system that has Node.js and npm installed.

Install Yeoman

npm install -g yo

Install the Three.js generator

npm install -g generator-threejs

Make a new directory for your project

mkdir threejs-project

Navigate to your new project directory

cd threejs-project

Generate a Three.js project with Yeoman

yo threejs

Deploy your project with Grunt

grunt serve

3D – Three.js

Three.js is a cross-browser JavaScript API for 3D programming. It allows developers to create 3D scenes and applications with ease. Three.js simplifies 3D programming by providing simple operations for common tasks. Three.js handles all the mathematics and basic 3D setup and configurations for the developer.

With raw WebGL, it takes around 112 lines of code to create a simple cube. Three.js allows for this to be done in a single line of code.
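
For comparison, here is roughly what that looks like. This is a sketch under a few assumptions: THREE is the three.js namespace, passed in as a parameter so the example does not depend on how the library was loaded (script tag, Bower, or a bundler), BoxGeometry is the box class of the three.js version in use, and createCube is a name invented here.

```javascript
// Creates a green 1 x 1 x 1 cube.
// Assumption: `THREE` is the three.js namespace supplied by the caller.
function createCube(THREE) {
  // A mesh is a geometry (the shape) combined with a material (the appearance).
  return new THREE.Mesh(
    new THREE.BoxGeometry(1, 1, 1),                   // width, height, depth
    new THREE.MeshBasicMaterial({ color: 0x00ff00 })  // flat green, not affected by lights
  );
}

// Possible use once three.js is loaded: scene.add(createCube(THREE));
```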

There are a few concepts related to Three.js and 3D Programming in general that should be understood before embarking on a project.

Scene

The scene is a container for 3D objects. It holds everything that exists in the 3D world.

Camera

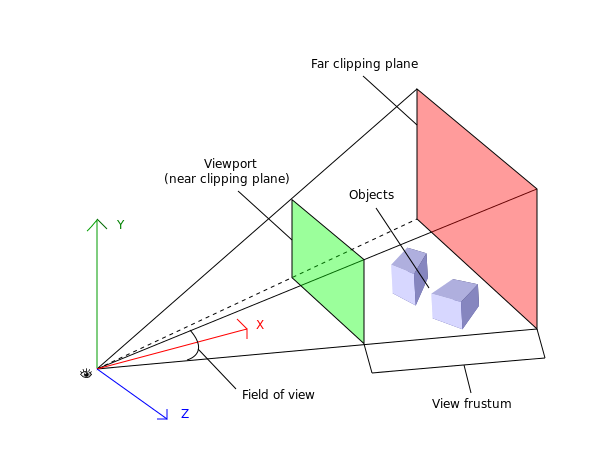

The camera is an object that cannot be seen by the user. The scene is displayed to the user from the perspective of the camera.
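
A perspective camera in Three.js might be set up along these lines. The field of view, clipping distances, and position are arbitrary values picked for illustration, createCamera is a hypothetical helper, and THREE is again assumed to be the three.js namespace passed in by the caller.

```javascript
// Creates a perspective camera for a viewport of the given size.
// Assumption: `THREE` is the three.js namespace supplied by the caller.
function createCamera(THREE, width, height) {
  const camera = new THREE.PerspectiveCamera(
    75,             // vertical field of view, in degrees
    width / height, // aspect ratio of the viewport
    0.1,            // near clipping plane: anything closer is not drawn
    1000            // far clipping plane: anything further is not drawn
  );
  // A new camera sits at the origin looking down the negative z axis,
  // so pull it back to bring objects near the origin into view.
  camera.position.set(0, 0, 5);
  return camera;
}
```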

Controls

Controls could be mouse, keyboard, touch events, gamepads, etc. Controls can be used to move and manipulate the camera or any other object in the scene.
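
As a small sketch of keyboard controls, the helper below nudges a position whenever an arrow key is pressed. The names KEY_DIRECTIONS and moveWithKey, and the choice to move on the x/z plane, are assumptions made for this example; position can be any object with numeric x and z properties, such as the position of a Three.js object.

```javascript
// Maps arrow keys to a direction on the x/z plane (the "floor" of the scene).
const KEY_DIRECTIONS = {
  ArrowUp:    { x: 0,  z: -1 },
  ArrowDown:  { x: 0,  z: 1 },
  ArrowLeft:  { x: -1, z: 0 },
  ArrowRight: { x: 1,  z: 0 },
};

// Moves `position` by `step` units in the direction of the pressed key.
// Returns true if the key was handled, false otherwise.
function moveWithKey(position, key, step) {
  const direction = KEY_DIRECTIONS[key];
  if (!direction) {
    return false;
  }
  position.x += direction.x * step;
  position.z += direction.z * step;
  return true;
}

// Possible wiring in a browser, where `cube` is an object in the scene:
//   window.addEventListener('keydown', (event) => {
//     moveWithKey(cube.position, event.key, 0.1);
//   });
```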

Objects

Objects are 3D entities in the scene. These can be anything from something as simple as a cube to something as complex as 3D humanoids, vehicles, buildings, etc. It’s great that we can create objects, but what are they made up of?

- Object Geometry: The geometry is the shape of the object. This includes all the points that make up the object as well as their positions relative to each other.

- Object Texture: The texture is typically an image that is overlaid on the geometry. It gives the shape its aesthetics and any effects that are required. It is the skin for the geometry.
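
In Three.js terms, combining a geometry with a texture might look like the sketch below. It assumes a three.js version that provides THREE.TextureLoader; createTexturedBox and the imageUrl parameter are hypothetical, and THREE is the three.js namespace passed in by the caller.

```javascript
// Creates a box whose faces are covered with the image at `imageUrl`.
// Assumptions: `THREE` is the three.js namespace supplied by the caller,
// and `imageUrl` points at an image the browser is allowed to load.
function createTexturedBox(THREE, imageUrl) {
  // Geometry: the shape, here a 1 x 1 x 1 box.
  const geometry = new THREE.BoxGeometry(1, 1, 1);

  // Texture: the image that acts as the skin. Loading happens in the
  // background; the box shows the image once it has arrived.
  const texture = new THREE.TextureLoader().load(imageUrl);
  const material = new THREE.MeshBasicMaterial({ map: texture });

  return new THREE.Mesh(geometry, material);
}
```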

Renderer

The renderer is responsible for assembling the scene and its objects, and displaying them to the user from the perspective of the camera.
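
Putting the renderer to work could look something like this. It is a sketch with invented helper names (attachRenderer, startLoop); THREE is the three.js namespace, container is assumed to be a DOM element, and requestFrame stands in for the browser's requestAnimationFrame so the loop does not depend on a global.

```javascript
// Creates a WebGL renderer and adds its <canvas> to the page.
// Assumptions: `THREE` is the three.js namespace and `container` is a DOM element.
function attachRenderer(THREE, container, width, height) {
  const renderer = new THREE.WebGLRenderer();
  renderer.setSize(width, height);
  container.appendChild(renderer.domElement); // the canvas three.js draws into
  return renderer;
}

// Draws the scene from the camera's point of view, then asks for the next frame.
function startLoop(renderer, scene, camera, requestFrame) {
  function frame() {
    renderer.render(scene, camera);
    requestFrame(frame);
  }
  frame();
}

// Possible use in a browser:
//   const renderer = attachRenderer(THREE, document.body, window.innerWidth, window.innerHeight);
//   startLoop(renderer, scene, camera, window.requestAnimationFrame.bind(window));
```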

Physics – Cannon.js

When developing 3D applications, more often than not, there is a requirement for physics, gravity, or collision detection.

It is tedious and difficult to write custom collision detection and physics code. Again, we want more fun and less mathematics and plumbing.

Cannon.js is a useful physics API that works well alongside Three.js. Cannon lets developers pair physics bodies with existing Three.js 3D objects, perform physics calculations on the bodies, and apply the results to the objects. This is useful for simulating gravity and creating worlds where collisions actually result in an effect instead of objects overlaying each other.
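
A minimal sketch of that idea follows. It assumes CANNON is the cannon.js namespace passed in by the caller and that mesh is a Three.js object (or anything whose position and quaternion have a copy method). The helper names, the sphere size, and the starting height are all made up for illustration.

```javascript
// Builds a physics world with gravity, a falling ball, and a ground plane.
// Assumption: `CANNON` is the cannon.js namespace supplied by the caller.
function createPhysicsWorld(CANNON) {
  const world = new CANNON.World();
  world.gravity.set(0, -9.82, 0); // pull everything down the y axis

  // A ball with mass 1 that starts 10 units above the ground.
  const ballBody = new CANNON.Body({ mass: 1, shape: new CANNON.Sphere(0.5) });
  ballBody.position.set(0, 10, 0);
  world.addBody(ballBody);

  // Mass 0 makes a body static. A plane faces along +z by default,
  // so rotate it to face up along +y and act as the floor.
  const groundBody = new CANNON.Body({ mass: 0, shape: new CANNON.Plane() });
  groundBody.quaternion.setFromAxisAngle(new CANNON.Vec3(1, 0, 0), -Math.PI / 2);
  world.addBody(groundBody);

  return { world, ballBody, groundBody };
}

// Advances the simulation by `dt` seconds, then copies the body's position
// and rotation onto the visible object so the two stay in step.
function stepAndSync(world, body, mesh, dt) {
  world.step(dt);
  mesh.position.copy(body.position);
  mesh.quaternion.copy(body.quaternion);
}
```

Calling stepAndSync once per rendered frame, for example with a dt of 1 / 60, keeps what the user sees in line with what the physics engine has calculated.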

Uses and Advantages

3D programming with JavaScript has many uses; here are just a few.

- Great for game development.

- Interactive applications.

- Simulations.

- Write once, run almost anywhere.