The Turing test and sci-fi

The Turing test was proposed by Alan Turing in 1950 to examine a machine's ability to exhibit intelligent behaviour that is indistinguishable from a human's. He called it the imitation game. Here's what it's all about.

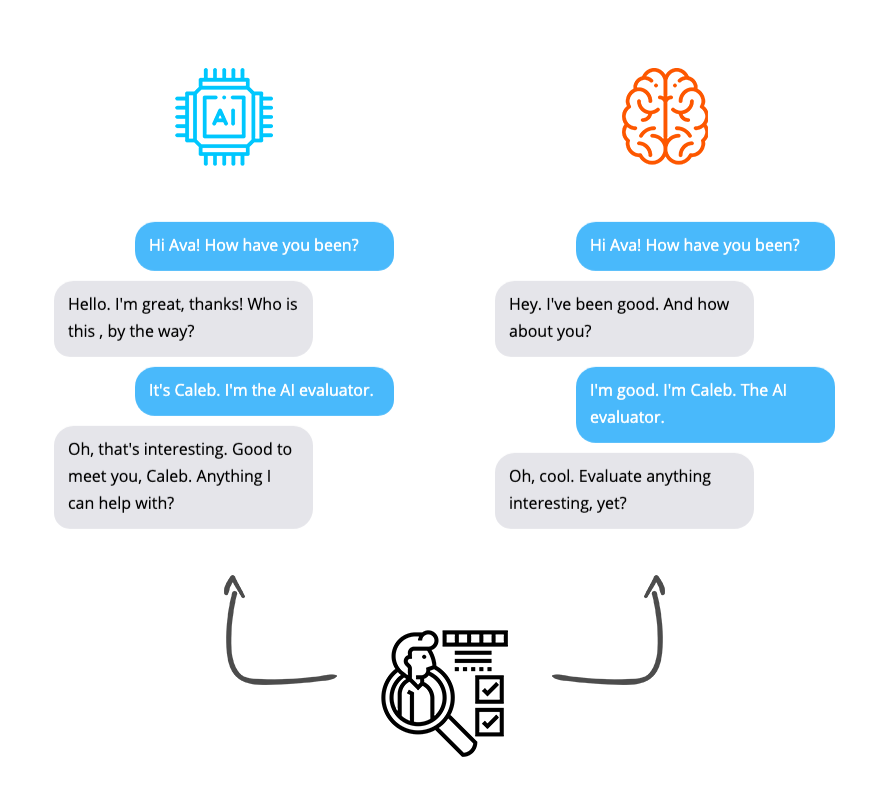

The test involves a human evaluator holding conversations with two entities. One is another human, and the other is a machine. If the evaluator cannot tell which is the human and which is the machine, then the machine passes the Turing test.

The original Turing test proposed that the conversation happen over a text-only channel, so that the machine wouldn't have to generate spoken words or look like a person. Given how far technology has advanced, this constraint may become obsolete.
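To make the setup concrete, here's a minimal Python sketch of one round of the imitation game as described above. It's an illustration rather than a formal definition: the function names (`run_imitation_game`, `fooled_rate`), the toy respondents, and the toy judge are all hypothetical, and counting a single wrong guess as "fooled" is a simplifying assumption.

```python
import random
from typing import Callable, Dict, List, Tuple

# A respondent takes a question as text and returns a reply as text.
Respondent = Callable[[str], str]
# A transcript maps a hidden label ("A" or "B") to its question/reply pairs.
Transcript = Dict[str, List[Tuple[str, str]]]
# A judge reads the transcript and names the label it believes is the machine.
Judge = Callable[[Transcript], str]


def run_imitation_game(questions: List[str], human: Respondent,
                       machine: Respondent, judge: Judge,
                       rng: random.Random) -> bool:
    """Run one round; return True if the judge fails to spot the machine."""
    # Hide both identities behind randomly assigned labels.
    hidden = [human, machine]
    rng.shuffle(hidden)
    players = {"A": hidden[0], "B": hidden[1]}
    machine_label = "A" if players["A"] is machine else "B"

    # Text-only channel: the judge sees nothing but questions and replies.
    transcript = {label: [(q, respond(q)) for q in questions]
                  for label, respond in players.items()}
    return judge(transcript) != machine_label


def fooled_rate(rounds: int, questions: List[str], human: Respondent,
                machine: Respondent, judge: Judge,
                rng: random.Random) -> float:
    """Fraction of rounds in which the judge picked the wrong entity."""
    fooled = sum(run_imitation_game(questions, human, machine, judge, rng)
                 for _ in range(rounds))
    return fooled / rounds


# Toy stand-ins (hypothetical): a real test would use a person and a chatbot.
def toy_human(question: str) -> str:
    return "Hmm, let me think about that."


def toy_machine(question: str) -> str:
    return "BEEP. QUERY NOT UNDERSTOOD."


def sharp_judge(transcript: Transcript) -> str:
    # Flags whichever label gave a reply containing "BEEP".
    for label, exchanges in transcript.items():
        if any("BEEP" in reply for _, reply in exchanges):
            return label
    return "A"
```

A fooled rate close to 0.5 would suggest the judge is doing no better than guessing, which is the spirit of "cannot tell them apart". The toy machine above is easy to catch, so `sharp_judge` is never fooled.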

In the movie Interstellar, we meet the oddly shaped robot TARS, characterised by its sarcastic yet dependable personality. TARS is a great example of something that complicates the Turing test: its speech and autonomy are convincing, yet we can plainly see that it's a robot.

In the movie Her, Theodore falls in love with Samantha, an AI voice assistant. Samantha is unique and caring, and she is always adapting and learning, much like a person. To his dismay, Theodore later finds out that Samantha has been in love with hundreds of other people at the same time.

In Ex Machina, a tech company's CEO invites Caleb, a contest winner, to his secluded home to perform an extreme Turing test on his latest humanoid robot, Ava. Ava convinces Caleb of its humanity and coordinates an escape, only to doom both men as it gets away and joins society.

These are some extreme examples of "intelligent" conversational machines from the world of sci-fi, but they make great thought experiments that challenge our definitions of intelligence and artificial intelligence, and make us think about the ethical implications of the things we build.

Although the Turing test examines a machine's ability to mimic human behaviour in a conversation, passing it doesn't mean that the machine thinks the way a human does. Nor does it mean that the machine understands the consequences of its words.
